Hear that? It’s the sound of Mac fans. No, not your shiny new M1 Mac’s fans—chances are, you’ll never hear those—but rather, the sound of excitement rippling through the Mac community. This is something big. Really big. Now, I’m only 33, but someday when I go full fuddy-duddy I will speak of this: the great Intel/Apple Silicon transition. The beginning of a new era at Apple.

All that sounds dramatic, of course, but it’s interesting to trace all of the different paths that led us to this point. The A-series chips, the introduction of Metal, rapid machine learning gains, the gradually degrading repairability scores as components became more integrated, the Secure Enclave, a new super-fast emulation layer, a new unified memory architecture, and a 5nm process… years and years of work have now come to fruition with the first Apple Silicon chips for Mac. And our minds are blown.

Suddenly, we’re handed a thin, entry-level fanless laptop that performs better than almost every other Mac computer out there, and a low-end MacBook Pro and Mac Mini that make current Mac Pro owners sweat and clutch their wheels. So many questions abound. What new hardware designs will these gains make possible? What on earth does Apple have in store for its high-end Macs? Will anyone else even be able to compete? It’s an exciting time to be a Mac lover, but, surprise: this post isn’t really about the Mac. It’s about the iPad.

There’s no question that Apple has struggled to craft a cohesive, compelling narrative for the iPad. For a long time, there seemed to be a distinct lack of product vision. Everyone likes to speculate over what role Steve Jobs ultimately intended the iPad to have in people’s lives, but not only is that pointless, it’s also irrelevant. We don’t need Steve to tell us what the iPad is good for. We know what it’s good for, and we can easily imagine what it could be good for, if only Apple would set it free.

Just as Apple left us with great expectations for its Pro Mac line-up, the latest iPad Air also raises the bar in new and interesting ways. The Air served as sort of an appetizer for the new M1 chips, while also receiving a generous trickle-down of features from the iPad Pro, including USB-C and support for the latest keyboard and Pencil accessories. There have been rumors of new mini-LED displays for the next-gen iPad Pros, but it’s going to take a lot more than new display tech to set the Pros apart.

Francisco Tolmasky (@tolmasky) recently tweeted:

“A sad but inescapable conclusion from the impressive launch of the M1 is just how much Apple squandered the potential of the iPad. The iPad has had amazing performance for awhile, so why is the M1 a game changer? Because it’s finally in a machine we can actually do things on.”

Francisco is right: Power and performance aren’t the bottleneck for iPad, and haven’t been for some time. So if raw power isn’t enough, and new display tech isn’t enough, where does the iPad go from here? Will it be abandoned once more, lagging behind the Mac in terms of innovation, or will Apple continue to debut its latest tech in this form factor? Is it headed toward functional parity with the Mac or will it always be hamstrung by Apple’s strict App Store policies and seemingly inconsistent investment in iPadOS?

It’s clear that Apple wants the iPad Pro to be a device that a wide variety of professionals can use to get work done. And since so many people use web apps for their work, the introduction of “desktop” Safari for iPad was an important step toward that goal. The Magic Keyboard, with its built-in trackpad, was another step.

Here are ten more steps I believe Apple could and should take to help nudge the iPad into this exciting next era of computing.

- Give the iPad Pro another port. Two USB 4.0 ports would be lovely.

- Adopt a landscape-first mindset. Rotate the Apple logo on the back and move the iPad’s front-facing camera to the long side, beneath the Apple Pencil charger, to better reflect how most people actually use their iPad Pros.

- Introduce Gatekeeper and app notarization for iOS. The process of side-loading apps should not be as simple as downloading them from the App Store. Bury it in Settings, make it slightly convoluted, whatever: just have an officially sanctioned way of doing it.

- Ruthlessly purge the App Store Guidelines of anything that prevents the iPad from serving as a development machine. Every kind of development from web to games should be possible on an iPad. And speaking of games—emulators should be allowed, too.

- Release a suite of professional first-party apps at premium prices. If someone can edit 4K videos in Final Cut on their M1 MacBook Air, they should be able to edit 4K videos in Final Cut on their iPad Pro. I refuse to believe that these pro apps can’t be re-imagined and optimized for a touch experience. If Apple leads the way in developing premium software for iPad, others will follow.

- Make it possible to write, release, and install plug-ins (where appropriate) for the aforementioned first-party apps.

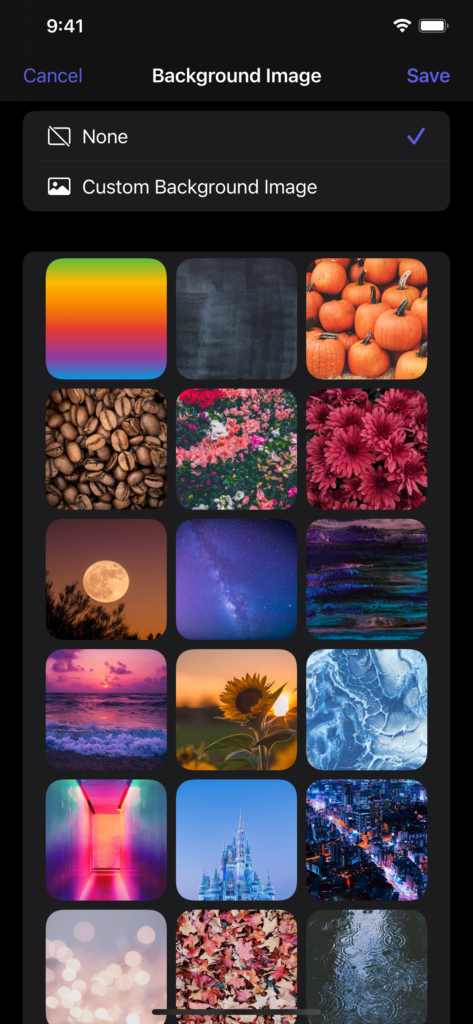

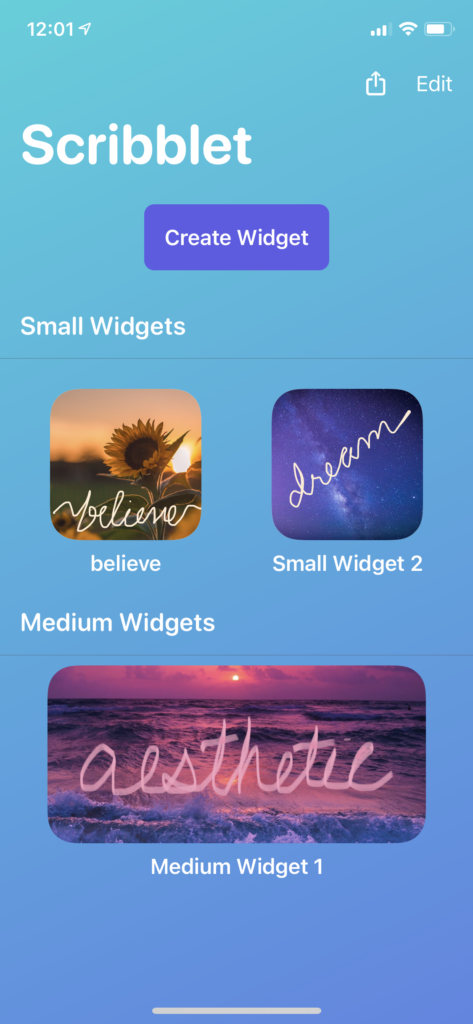

- Bring App Library to the iPad and allow widgets to be positioned anywhere on the Home Screen. This isn’t groundbreaking, it just annoys the heck out of me.

- Release a new keyboard + trackpad case accessory that allows the iPad to be used in tablet mode without removing it from the case.

- Introduce Time Machine backups for iPadOS.

- 5G, ofc.

In the end, fostering a vibrant community of iPad app developers can only benefit the Mac (and vice versa).

It’s simple: people love their iPads. They love them so much they wish they could do even more with them. The new M1 Macs should give iPad fans reason to be excited; now that we’ve seen hints of what future Macs can be, it’s time for the iPad to reassert itself—to remind us once again who it’s for, and what makes it special.

In other words: Your move, iPad.